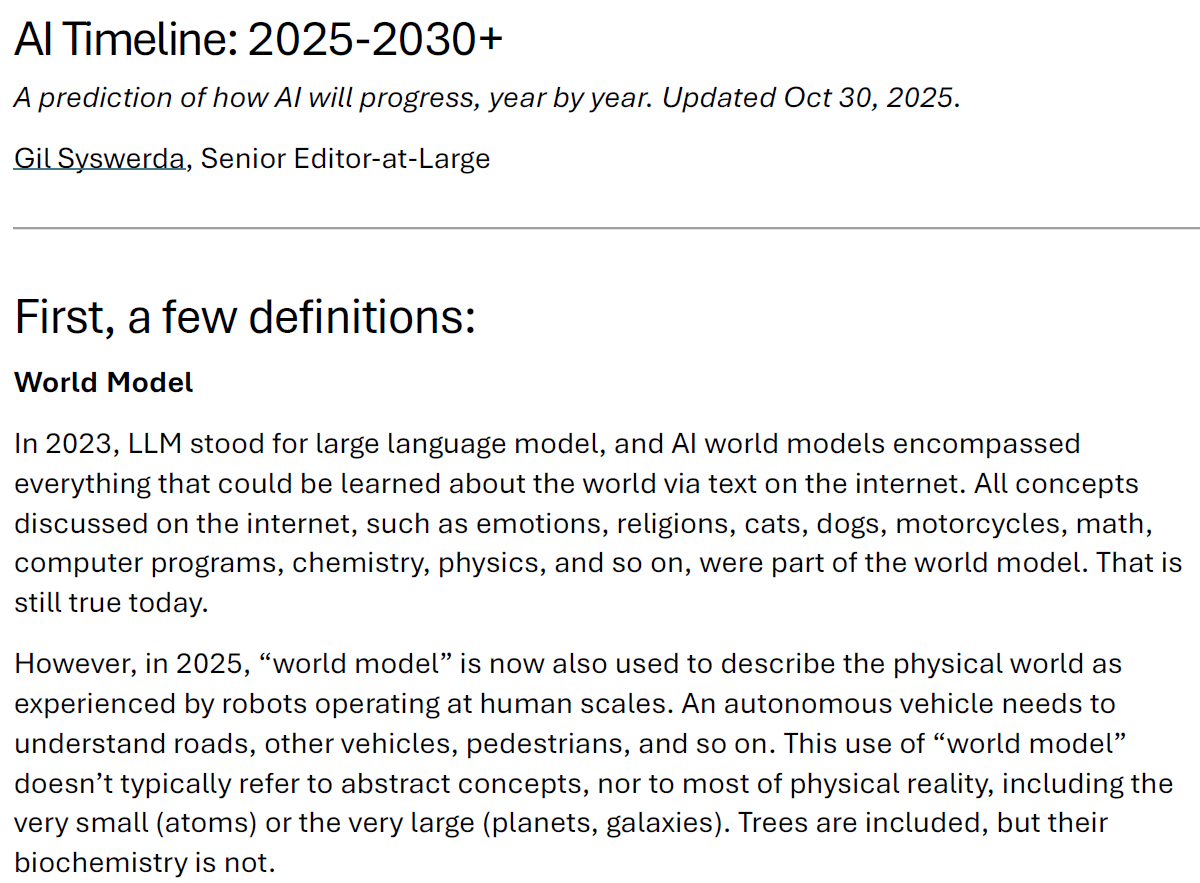

Dario Amodei, The Adolescence of Technology: Confronting and Overcoming the Risks of Powerful AI

Anthropic CEO Dario Amodei envisions a 'country of geniuses in a datacenter' with five enabling properties: 1) smarter than top humans across most domains, 2) able to act autonomously over long horizons, 3) able to use digital tools, 4) able to coordinate many copies, and 5) able to operate at much higher speed than humans. You could use these as a checklist for developing such a system, as Anthropic probably does. #5 already holds in some domains (real-time learning being a counter-example), #3 is well in progress, and #1, #2, and #4 remain significant obstacles. He does not mention, e.g., robust generalization, abstraction, or world models. The essay also discusses risks, governance, and economic implications. Its overall thesis: AI's upside remains enormous, but humanity must treat the next few years as a civilizational test requiring technical alignment work, pragmatic regulation, geopolitical realism, economic adaptation, and moral seriousness.