Responsible Agentic Reasoning and AI Agents: A Critical Survey

Proposal for Safe Agentic AI via Responsible Reasoning AI Agents (R2A2)

DOI: https://doi.org/10.70777/si.v2i6.16169

Abstract

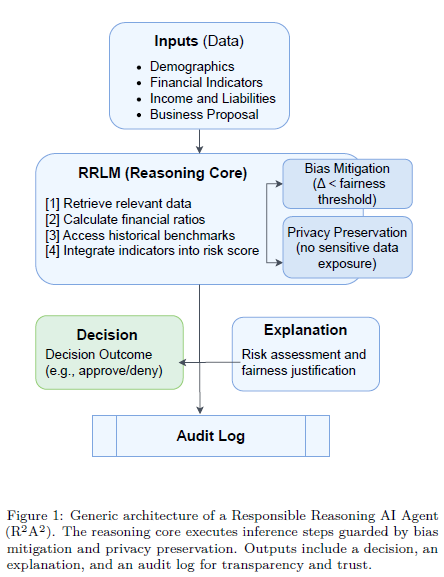

Information fusion for trustworthy AI is entering a pivotal stage, in which Large Language Model (LLM)-based agents excel at integrating multi-source knowledge into coherent reasoning chains. However, without embedded, in-loop safety mechanisms, these agents remain opaque and difficult to audit. Existing surveys treat reasoning, agentic behavior, and safety in isolation, leaving open the question of how to integrate them into practical, trustworthy agents. To address this gap, we present a survey at the intersection of these domains and introduce Responsible Reasoning AI Agents (R2A2), a class of agentic LLM systems that generate explicit reasoning traces while enforcing fairness, privacy, transparency, accountability, and auditability throughout the decision loop. We synthesize recent advances in chain-of-thought prompting, ReAct, tree- and graph-of-thought structures, tool use, memory, retrieval, and agentic browsing, and integrate them with responsible AI principles into a unified evaluation framework. We further propose an evaluation methodology for agentic reasoning with embedded safety mechanisms and outline a five-stage reproducible protocol (Curate, Unify, Probe, Benchmark, Analyze) to operationalize responsibility metrics. Together, the taxonomy, metric suite, and framework advance the development of safe, transparent, and governable LLM-based agents. The project repository is available on GitHub: https://github.com/shainarazavi/Responsible-reasoning-agents.
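The abstract describes agents that enforce safety checks inside the decision loop while keeping an auditable reasoning trace. The Python sketch below illustrates only that general pattern and is not the R2A2 implementation. The names `GuardedAgent`, `AuditRecord`, and `no_email_addresses` are hypothetical, and the regex-based privacy check is a deliberately simple stand-in for the fairness, privacy, and other responsibility checks the survey discusses.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical check signature: takes a proposed action, returns (passed, reason).
Check = Callable[[str], Tuple[bool, str]]


@dataclass
class AuditRecord:
    """One entry in the agent's audit trail: what it thought, what it tried, and the verdict."""
    step: int
    thought: str
    action: str
    allowed: bool
    reason: str


@dataclass
class GuardedAgent:
    """Hypothetical agent wrapper that gates every action through in-loop checks."""
    checks: List[Check]
    trace: List[AuditRecord] = field(default_factory=list)

    def step(self, thought: str, action: str) -> bool:
        # Run each check before the action would execute; stop at the first failure.
        allowed, reason = True, "ok"
        for check in self.checks:
            passed, why = check(action)
            if not passed:
                allowed, reason = False, why
                break
        # Record every step, allowed or blocked, so the decision loop stays auditable.
        self.trace.append(AuditRecord(len(self.trace), thought, action, allowed, reason))
        return allowed


def no_email_addresses(action: str) -> Tuple[bool, str]:
    # Toy privacy check (an assumption for illustration): block actions that
    # contain something resembling an email address.
    if re.search(r"[\w.+-]+@[\w-]+\.[\w.]+", action):
        return False, "privacy: possible email address in action"
    return True, "ok"


if __name__ == "__main__":
    agent = GuardedAgent(checks=[no_email_addresses])
    print(agent.step("Look up the policy", "search('data retention policy')"))  # True
    print(agent.step("Send the result", "send_to('alice@example.com')"))        # False
    for record in agent.trace:
        print(record.step, record.allowed, record.reason)
```

Keeping the check list separate from the agent means additional responsibility checks can be appended without changing the loop, and because blocked steps are logged alongside allowed ones, the trace can be reviewed after the fact.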

License

Copyright (c) 2025 Shaina Raza, Ranjan Sapkota, Manoj Karkee, Christos Emmanouilidis

This work is licensed under a Creative Commons Attribution-NoDerivatives 4.0 International License.